Give your agentic chatbots a fast and reliable long-term memory

When scaling conversational agents, the data layer design often determines success or failure. To support millions of users, agents need conversational continuity—the ability to maintain responsive chats while preserving the deep context that backend models require to remain coherent.

In this article, we explore how to use Google Cloud solutions to solve two primary data challenges in AI: fast context updates for real-time interaction and efficient retrieval for long-term history. We’ll dive into a polyglot approach using Redis, Bigtable, and BigQuery that ensures your agent retains detail from recent interactions to months-old archives.

The Polyglot Storage Strategy

What is a Polyglot Approach?

A polyglot approach uses a multi-tiered storage strategy, leveraging specialized data services rather than a single database to manage different data lifecycles. By matching the “temperature” and volume of data to the right tool—such as in-memory caches for speed and data warehouses for analytics—you optimize both performance and cost.

Defining the Memory Tiers on Google Cloud

To maintain continuity, we categorize memory into three distinct tiers:

- Short-term: Sub-millisecond “hot” context retrieval.

- Mid-term: Petabyte-scale durable system of record.

- Long-term: Archival and analytical insights.

1. Short-Term Memory: Memorystore for Redis

Users expect chat histories to load instantaneously. For the immediate context of a conversation, Memorystore for Redis serves as the primary cache.

- The Benefit: As a fully managed in-memory store, it provides the sub-millisecond latency required for a natural conversational flow.

- The Implementation: Since chat sessions are incrementally growing lists, we use Redis Lists. By utilizing the native RPUSH command, the application transmits only the newest message, avoiding the network-heavy “read-modify-write” cycles common in simpler stores.
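To make the append-only pattern concrete, here is a minimal sketch of writing and reading a session list. It assumes a redis-py style client (any object exposing `rpush`, `ltrim`, `expire`, and `lrange`). The key naming scheme, the 50-message cap, and the one-hour TTL are illustrative assumptions, not values from this article.

```python
import json

MAX_CACHED_MESSAGES = 50      # assumption: cap on hot context kept per session
SESSION_TTL_SECONDS = 3600    # assumption: idle sessions expire after one hour


def session_key(session_id: str) -> str:
    # Hypothetical key naming scheme for one chat session's list.
    return f"chat:{session_id}:messages"


def append_message(client, session_id: str, role: str, text: str) -> int:
    """Append one message with RPUSH; only the new message crosses the network."""
    key = session_key(session_id)
    payload = json.dumps({"role": role, "text": text})
    length = client.rpush(key, payload)  # list length reported by RPUSH
    # Optional housekeeping: keep only the newest entries and refresh the TTL.
    client.ltrim(key, -MAX_CACHED_MESSAGES, -1)
    client.expire(key, SESSION_TTL_SECONDS)
    return length


def recent_messages(client, session_id: str, count: int = 10) -> list:
    """Return the last `count` messages of the session, oldest first."""
    raw = client.lrange(session_key(session_id), -count, -1)
    return [json.loads(item) for item in raw]
```

With redis-py installed, `client` would typically be a `redis.Redis(...)` instance pointed at your Memorystore endpoint. The trim and expiry calls are optional additions to the RPUSH pattern described above and can be dropped if the cache should hold full sessions.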

2. Mid-Term Memory: Cloud Bigtable

As conversations grow, agentic applications need a durable, scalable home for history. Cloud Bigtable acts as the definitive system of record.

- The Scale: Bigtable is a NoSQL database designed for high-velocity, write-heavy workloads—perfect for millions of simultaneous interactions.

- Storage Optimization: Keep your active cluster lean by implementing garbage collection policies (e.g., retaining only the last 60 days in the high-performance tier).

- Key Strategy: Use a user_id#session_id#reverse_timestamp row key pattern. This co-locates messages from a single session and sorts the newest ones first, so a single range scan can reload recent history efficiently.
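The row key pattern is easier to reason about with a small example. The sketch below builds keys in plain Python and sorts them the way Bigtable orders rows (lexicographically). The maximum timestamp constant, the 13-digit zero padding, and the sample IDs are assumptions chosen for illustration. It also assumes that user and session IDs never contain the `#` separator.

```python
MAX_TIMESTAMP_MS = 9_999_999_999_999  # assumption: largest 13-digit millisecond value


def reverse_timestamp(timestamp_ms: int) -> str:
    # Subtract from a fixed maximum and zero-pad so string order matches numeric order.
    return f"{MAX_TIMESTAMP_MS - timestamp_ms:013d}"


def message_row_key(user_id: str, session_id: str, timestamp_ms: int) -> str:
    return f"{user_id}#{session_id}#{reverse_timestamp(timestamp_ms)}"


def session_prefix(user_id: str, session_id: str) -> str:
    # Every row of one session shares this prefix, so a prefix scan returns them together.
    return f"{user_id}#{session_id}#"


# Three messages sent one minute apart in the same (hypothetical) session.
timestamps = (1_700_000_000_000, 1_700_000_060_000, 1_700_000_120_000)
keys = [message_row_key("u42", "s7", ts) for ts in timestamps]

# Sorting the strings mimics Bigtable's lexicographic row order: newest comes first.
newest_first = sorted(keys)
```

Because the newest message sorts first within the session prefix, reading "the last N messages" becomes a prefix scan with a row limit of N instead of a scan over the whole session.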

3. Long-Term Memory & Analytics: BigQuery

For archival and intelligence, data moves to BigQuery. While Bigtable serves the live app, BigQuery is designed for complex SQL queries at scale.

- The Feedback Loop: This operational data becomes a goldmine for improving user experience. You can run sentiment analysis or trend reporting without impacting the performance of the user-facing chat components.

4. Artifact Storage: Cloud Storage (GCS)

Unstructured data—like images uploaded for analysis or AI-generated documents—lives in Cloud Storage.

- Pointer Strategy: Redis and Bigtable records store a URI pointer (e.g., gs://bucket/file) rather than the raw binary data.

- Security: Serve files using signed URLs, providing time-limited access to the client without exposing your buckets publicly.
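As a rough illustration of the pointer strategy, the sketch below stores only a `gs://` URI in the chat record and resolves it to a signed URL on demand. The helper names, the record fields, and the 15-minute expiry are hypothetical. `storage_client` is assumed to be a `google.cloud.storage.Client` (or any object with the same `bucket(...).blob(...).generate_signed_url(...)` shape) whose credentials are able to sign URLs.

```python
from datetime import timedelta
from urllib.parse import urlparse


def parse_gcs_uri(uri: str) -> tuple:
    """Split gs://bucket/path/to/object into (bucket, object_name)."""
    parsed = urlparse(uri)
    if parsed.scheme != "gs" or not parsed.netloc or not parsed.path.strip("/"):
        raise ValueError(f"not a gs:// object URI: {uri!r}")
    return parsed.netloc, parsed.path.lstrip("/")


def attachment_message(role: str, text: str, gcs_uri: str) -> dict:
    # The chat record carries only the pointer; the bytes stay in Cloud Storage.
    parse_gcs_uri(gcs_uri)  # validate the pointer before storing it
    return {"role": role, "text": text, "attachment_uri": gcs_uri}


def signed_download_url(storage_client, gcs_uri: str, minutes: int = 15) -> str:
    """Create a time-limited V4 signed URL for the object behind the pointer."""
    bucket_name, object_name = parse_gcs_uri(gcs_uri)
    blob = storage_client.bucket(bucket_name).blob(object_name)
    return blob.generate_signed_url(
        version="v4",
        expiration=timedelta(minutes=minutes),
        method="GET",
    )
```

The record returned by `attachment_message` is what would be serialized into Redis or Bigtable. The signed URL is generated only when a client actually needs to download the file, which keeps the bucket private.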

A hybrid sync-async strategy for optimal flow of data

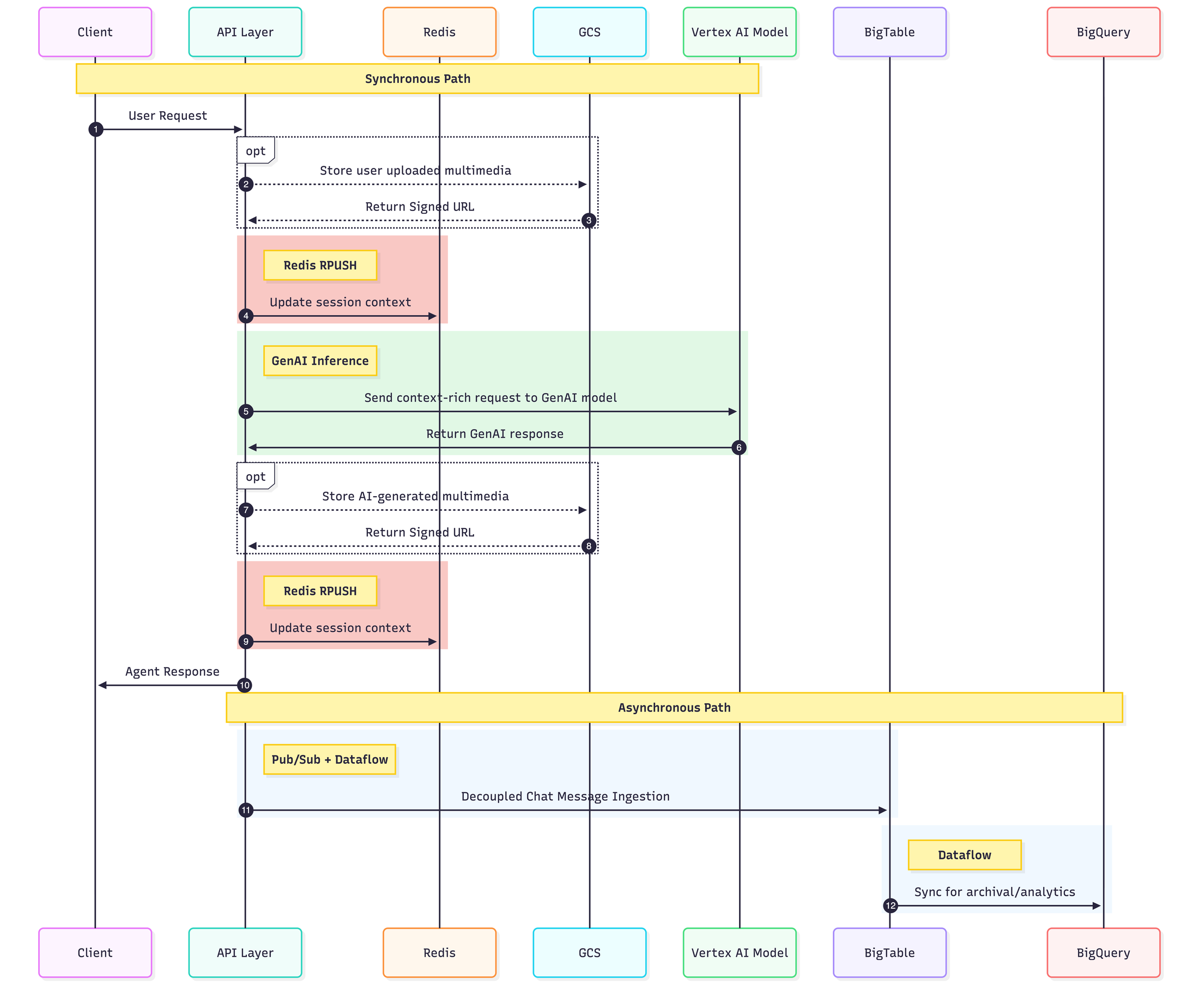

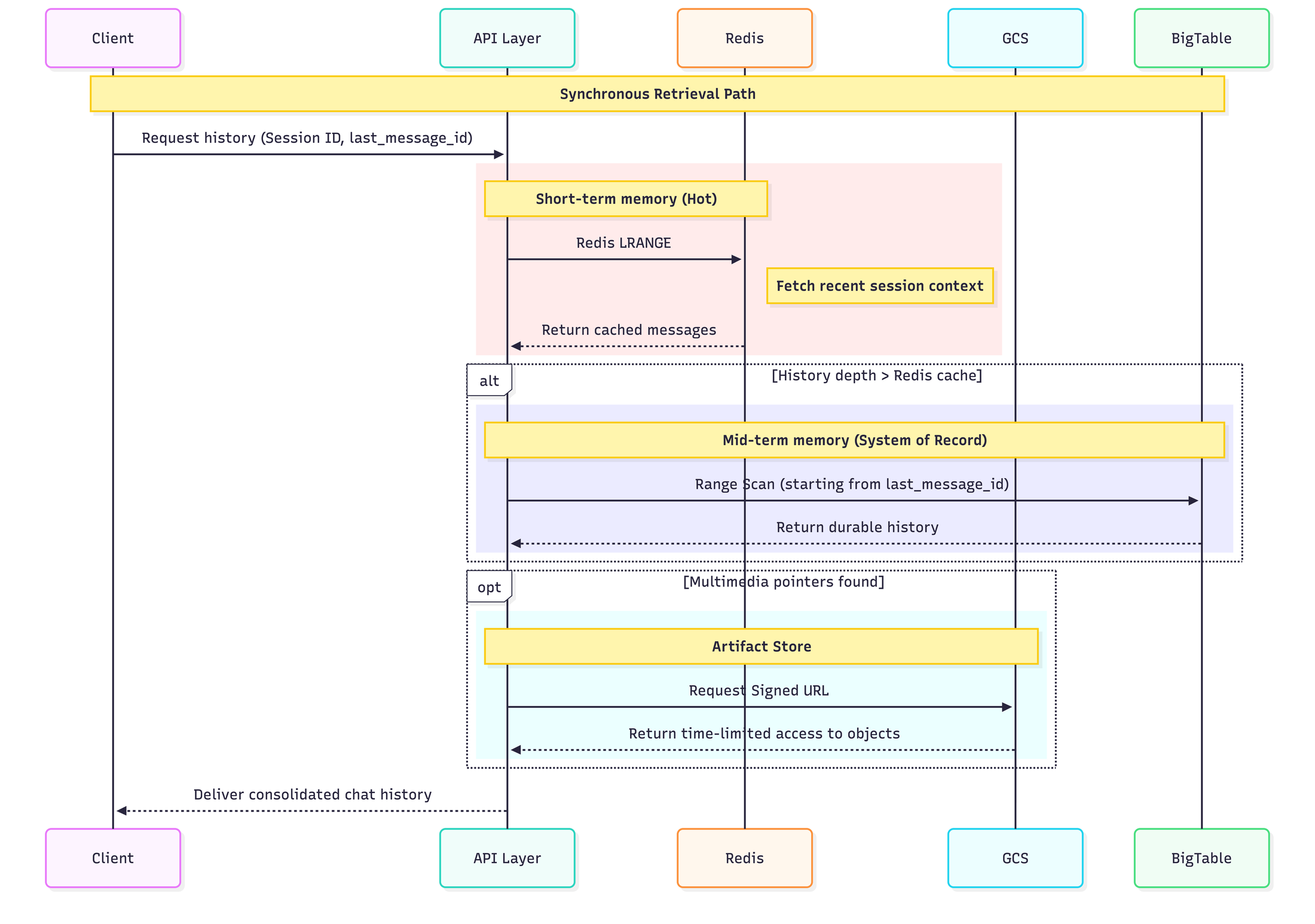

As shown in the sequence diagrams below, the hybrid sync-async strategy utilizes the abovementioned storage solutions to balance high-speed consistency with durable data persistence.

The diagram below shows how a user message and corresponding agent response traverse through the architecture:

The diagram below shows how data flows across the architecture when a user decides to retrieve chat history for a particular session:
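One common way to realize a hybrid sync-async flow is to update the cache on the request path, queue the durable write in the background, and fall back to the durable store when the cache misses. The toy sketch below assumes that pattern and is not taken from the diagrams. In-memory dictionaries stand in for Redis and Bigtable, and the class and method names are hypothetical.

```python
import asyncio
from collections import defaultdict


class HybridChatMemory:
    """Toy model: `cache` plays the Redis role, `durable` the Bigtable role."""

    def __init__(self):
        self.cache = defaultdict(list)    # session_id -> messages (hot tier)
        self.durable = defaultdict(list)  # session_id -> messages (system of record)
        self.pending = asyncio.Queue()    # writes waiting to be persisted

    async def record(self, session_id, message):
        # Synchronous part of the request path: update the hot tier first.
        self.cache[session_id].append(message)
        # Asynchronous part: enqueue the durable write and return right away.
        await self.pending.put((session_id, message))

    async def persist_worker(self):
        # Background task that drains the queue into the durable store.
        while True:
            session_id, message = await self.pending.get()
            self.durable[session_id].append(message)
            self.pending.task_done()

    async def history(self, session_id):
        # Cache-aside read: serve from the hot tier, otherwise reload and re-cache.
        if session_id in self.cache:
            return list(self.cache[session_id])
        messages = list(self.durable.get(session_id, []))
        if messages:
            self.cache[session_id] = list(messages)
        return messages


async def demo():
    memory = HybridChatMemory()
    worker = asyncio.create_task(memory.persist_worker())
    await memory.record("s1", "hi")
    await memory.record("s1", "hello")
    await memory.pending.join()  # wait until the durable writes have landed
    memory.cache.clear()         # simulate cache eviction
    reloaded = await memory.history("s1")
    worker.cancel()
    return reloaded
```

Running `asyncio.run(demo())` exercises both paths: the messages are written through the cache, persisted in the background, and then reloaded from the durable stand-in after the cache is cleared. A production version would also need retry and ordering guarantees for the queued writes, which this sketch leaves out.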

Start building now

Ready to build an agent with a robust persistence layer?

- Build agents quickly: Start prototyping your agentic workflows on Vertex AI Agent Builder.

- Configure your cache: Determine which Memorystore for Redis configuration best suits your latency and availability needs.

- Design a robust Bigtable schema: Review the schema design best practices.

- Bridge to analytics: Use the Bigtable change stream to BigQuery template to ready your live chat logs for actionable business insights.

- Bring data to life with analytics: Use Looker Conversational Analytics to drive product decisions through business intelligence.


Frequently Asked Questions

- Why use Memorystore for Redis if Bigtable is already fast?

Answer: Latency and cost. Redis provides sub-millisecond response times for “hot” chat context, which is essential for natural AI conversation flow. Bigtable is fast, but it is optimized for high-throughput writes and petabyte-scale storage rather than the ultra-low latency required for per-message retrieval.

- How do I prevent Bigtable from becoming too expensive as history grows?

Answer: Use Garbage Collection (GC) policies. You can set rules on a column family to automatically delete cells older than a specific age (e.g., 60 days) or to keep only a fixed number of versions of each cell. This keeps your “Mid-term” memory lean and performant.

- What is the benefit of the user_id#session_id#reverse_timestamp row key?

Answer: It enables efficient range scans. Since Bigtable sorts keys lexicographically, a reverse timestamp places the most recent messages first within each session's key range. Your app can then pull the “last 10 messages” by reading the first 10 rows under the session prefix, without scanning the entire session history.

- Can I store images and audio directly in Bigtable?

Answer: It is not recommended. Large binary objects (BLOBs) degrade performance in NoSQL databases such as Bigtable. Use a pointer strategy: upload the file to Cloud Storage and store only the URI (e.g., gs://bucket/file.png) in Bigtable. Use signed URLs to give the user temporary, secure access to the file.

- How does data move from the live chat (Bigtable) to analytics (BigQuery)?

Answer: Use the Bigtable change streams to BigQuery template. This managed Dataflow pipeline captures each new message written to Bigtable and streams it into BigQuery in near real time, enabling timely analytical insights without manual exports.

____________________________________________________________

This article was written by Google Cloud and originally published here.