Google Gemini: Everything you need to know about the new generative AI platform

Google’s trying to make waves with Gemini, a new generative AI platform that recently made its big debut. But while Gemini appears to be promising in a few aspects, it’s falling short in others. So what is Gemini? How can you use it? And how does it stack up to the competition?

To make it easier to keep up with the latest Gemini developments, we’ve put together this handy guide, which we’ll keep updated as new Gemini models and features are released.

What is Gemini?

Gemini is Google’s long-promised, next-gen generative AI model family, developed by Google’s AI research labs DeepMind and Google Research. It comes in three flavors:

- Gemini Ultra, the flagship Gemini model

- Gemini Pro, a “lite” Gemini model

- Gemini Nano, a smaller “distilled” model that runs on mobile devices like the Pixel 8 Pro

All Gemini models were trained to be “natively multimodal”: in other words, able to work with and use more than just text. They were pre-trained and fine-tuned on a variety of audio, images and videos, a large set of codebases and text in different languages.

That sets Gemini apart from models such as Google’s own large language model LaMDA, which was only trained on text data. LaMDA can’t understand or generate anything other than text (e.g. essays, email drafts and so on) — but that isn’t the case with Gemini models. Their ability to understand images, audio and other modalities is still limited, but it’s better than nothing.

What’s the difference between Bard and Gemini?

Image Credits: Google

Google, proving once again that it lacks a knack for branding, didn’t make it clear from the outset that Gemini is separate and distinct from Bard. Bard is simply an interface through which certain Gemini models can be accessed — think of it as an app or client for Gemini and other GenAI models. Gemini, on the other hand, is a family of models — not an app or front end. There’s no standalone Gemini experience, nor will there likely ever be. If you were to compare to OpenAI’s products, Bard corresponds to ChatGPT, OpenAI’s popular conversational AI app, and Gemini corresponds to the language model that powers it, which in ChatGPT’s case is GPT-3.5 or 4.

Incidentally, Gemini is also totally independent from Imagen-2, a text-to-image model that may or may not fit into the company’s overall AI strategy. Don’t worry, you’re not the only one confused by this!

What can Gemini do?

Because the Gemini models are multimodal, they can in theory perform a range of tasks, from transcribing speech to captioning images and videos to generating artwork. Few of these capabilities have reached the product stage yet (more on that later), but Google’s promising all of them — and more — at some point in the not-too-distant future.

Of course, it’s a bit hard to take the company at its word.

Google seriously under-delivered with the original Bard launch. And more recently it ruffled feathers with a video purporting to show Gemini’s capabilities that turned out to have been heavily doctored and was more or less aspirational. Gemini is, to the tech giant’s credit, available in some form today — but a rather limited form.

Still, assuming Google is being more or less truthful with its claims, here’s what the different tiers of Gemini models will be able to do once they’re released:

Gemini Ultra

So far, few people have gotten their hands on Gemini Ultra, the “foundation” model on which the others are built: just a “select set” of customers across a handful of Google apps and services. That won’t change until sometime later this year, when Google’s largest model launches more broadly. Most info about Ultra has come from Google-led product demos, so it’s best taken with a grain of salt.

Google says that Gemini Ultra can be used to help with things like physics homework, solving problems step-by-step on a worksheet and pointing out possible mistakes in already filled-in answers. Google also says Gemini Ultra can be applied to tasks such as identifying scientific papers relevant to a particular problem, extracting information from those papers and “updating” a chart from one by generating the formulas necessary to recreate the chart with more recent data.

Gemini Ultra technically supports image generation, as alluded to earlier. But that capability won’t make its way into the productized version of the model at launch, according to Google, perhaps because the mechanism is more complex than how apps such as ChatGPT generate images. Rather than feed prompts to an image generator (like DALL-E 3, in ChatGPT’s case), Gemini outputs images “natively” without an intermediary step.

Gemini Pro

Unlike Gemini Ultra, Gemini Pro is available publicly today. But confusingly, its capabilities depend on where it’s used.

Google says that in Bard, where Gemini Pro launched first in text-only form, the model is an improvement over LaMDA in its reasoning, planning and understanding capabilities. An independent study by Carnegie Mellon and BerriAI researchers found that Gemini Pro is indeed better than OpenAI’s GPT-3.5 at handling longer and more complex reasoning chains.

But the study also found that, like all large language models, Gemini Pro particularly struggles with math problems involving several digits, and users have found plenty of examples of bad reasoning and mistakes. It made plenty of factual errors for simple queries like who won the latest Oscars. Google has promised improvements, but it’s not clear when they’ll arrive.

Gemini Pro is also available via an API in Vertex AI, Google’s fully managed AI developer platform. That API accepts text as input and generates text as output. An additional endpoint, Gemini Pro Vision, can process text and imagery, including photos and video, and output text, along the lines of OpenAI’s GPT-4 with Vision model.

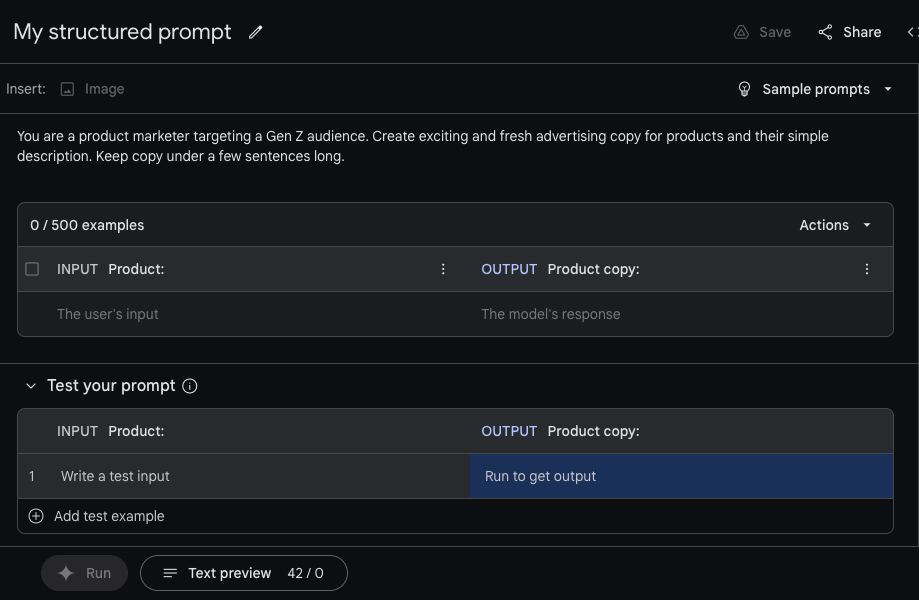

Using Gemini Pro in Vertex AI. Image Credits: Gemini
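
For developers, the difference between the two endpoints comes down to what goes into the request. The sketch below is a rough illustration rather than Google's reference code: it only builds a request body locally and sends nothing over the network. The helper names `build_request` and `pick_model` are hypothetical, and the field names (`contents`, `parts`, `inlineData`) and model IDs (`gemini-pro`, `gemini-pro-vision`) are assumptions based on the Vertex AI request format at the time of writing, so check them against Google's current documentation before relying on them.

```python
import base64
import json


def build_request(prompt, image_bytes=None, mime_type="image/png"):
    """Build a JSON-serializable request body (hypothetical helper).

    Text-only prompts produce a single text part; when image bytes are
    supplied, a base64-encoded inline image part is appended.
    """
    parts = [{"text": prompt}]
    if image_bytes is not None:
        parts.append({
            "inlineData": {  # assumed field name, verify against the docs
                "mimeType": mime_type,
                "data": base64.b64encode(image_bytes).decode("ascii"),
            }
        })
    return {"contents": [{"role": "user", "parts": parts}]}


def pick_model(image_bytes=None):
    """Choose the assumed model ID: vision endpoint only when an image is present."""
    return "gemini-pro-vision" if image_bytes is not None else "gemini-pro"


if __name__ == "__main__":
    # Text in, text out.
    print(pick_model(), json.dumps(build_request("Summarize this article.")))
    # Text plus image in, text out (placeholder bytes stand in for a real image).
    fake_image = b"not-a-real-image"
    print(pick_model(fake_image), json.dumps(build_request("Describe this photo.", fake_image)))
```

In both cases the model's reply is text; only the input side changes between Gemini Pro and Gemini Pro Vision.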

Within Vertex AI, developers can customize Gemini Pro to specific contexts and use cases using a fine-tuning or “grounding” process. Gemini Pro can also be connected to external, third-party APIs to perform particular actions.